After setting everything up in server Google Tag Manager, I’ve realized that I’ve hit the container’s maximum size, which is 200KB. I am not sure why so little space is available for server GTM. I understand the size restriction for web GTM, but not for server.

This is what’s taking up the space:

Traffic Source Tags

- Facebook: 21.4KB (facebook-tag/template.js at main · stape-io/facebook-tag · GitHub)

- TikTok: 14.8KB (tiktok-tag/template.js at master · stape-io/tiktok-tag · GitHub)

- Google: 16.9KB (gads-offline-conversion-tag/template.js at main · stape-io/gads-offline-conversion-tag · GitHub)

- Microsoft: 11.4KB (microsoft-ads-offline-conversion-tag/template.js at main · stape-io/microsoft-ads-offline-conversion-tag · GitHub)

- Taboola: 2.83KB (taboola-tag/template.js at main · stape-io/taboola-tag · GitHub)

- Outbrain: 3KB (outbrain-tag/template.js at master · stape-io/outbrain-tag · GitHub)

- Snapchat: 16KB (snapchat-tag/template.js at master · stape-io/snapchat-tag · GitHub)

- Reddit: 11KB (reddit-tag/template.js at main · stape-io/reddit-tag · GitHub)

- Pinterest: 13.3KB (pinterest-tag/template.js at master · stape-io/pinterest-tag · GitHub)

- LinkedIn: 8.92KB (linkedin-tag/template.js at main · stape-io/linkedin-tag · GitHub)

- Twitter: 10.7KB (twitter-tag/template.js at master · stape-io/twitter-tag · GitHub)

TOTAL = 130.25KB

Other Tags

- Data Client: 18.7KB (data-client/template.js at main · stape-io/data-client · GitHub)

- Firestore Writer: 2KB (firestore-writer-tag/template.js at master · stape-io/firestore-writer-tag · GitHub)

- Logger: 2KB (logger-tag/template.js at master · stape-io/logger-tag · GitHub)

TOTAL = 22.7KB

Variables

- Advanced Lookup Table: 2KB x 13 variables = 26KB (advanced-lookup-table-variable/template.js at main · stape-io/advanced-lookup-table-variable · GitHub)

- Array Builder: 2KB (GitHub - stape-io/array-builder-variable)

- Array Map: 1KB x 7 variables = 7KB (GitHub - Sogody/array-map-gtm-template)

- Constant: 0.5KB x 9 variables = 4.5KB (native variable, not sure about the size)

- Event Data: 0.5KB x 40 variables = 20KB (native variable, not sure about the size)

- Firestore Lookup: 0.5KB x 35 variables = 17.5KB (native variable, not sure about the size)

- Lookup Table: 1KB x 38 variables = 38KB (native variable, not sure about the size)

- Object Builder: 1KB (GitHub - stape-io/object-builder-variable: Object builder variable for Google Tag Manager Server Side)

- Split String: 1KB x 2 variables = 2KB (GitHub - gtm-templates-knowit-experience/sgtm-split-string-variable: Variable Template (Server) for Google Tag Manager for splitting a string. You can split by first, last or Nth occurrence. Result can be the split result itself, complete or limited string returned.)

TOTAL = 118KB

So if you sum it all up, it comes to 270.95KB, well beyond the 200KB limit.
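In case anyone wants to check the math or plug in their own numbers, here is a rough Python sketch of the tally. The sizes are copied from the lists above, the native variable sizes are my own guesses, and it assumes that every variable instance counts separately toward the limit, which I have not confirmed. The names (`LIMIT_KB`, `traffic_source_tags`, and so on) are just made up for this example.

```python
# All sizes are in KB and copied from the lists in this post.
LIMIT_KB = 200  # container limit as stated in this post

traffic_source_tags = {
    "Facebook": 21.4, "TikTok": 14.8, "Google": 16.9, "Microsoft": 11.4,
    "Taboola": 2.83, "Outbrain": 3, "Snapchat": 16, "Reddit": 11,
    "Pinterest": 13.3, "LinkedIn": 8.92, "Twitter": 10.7,
}

other_tags = {"Data Client": 18.7, "Firestore Writer": 2, "Logger": 2}

# (estimated size per variable, number of variables).
# Assumption: each instance counts separately; native sizes are guesses.
variables = {
    "Advanced Lookup Table": (2, 13),
    "Array Builder": (2, 1),
    "Array Map": (1, 7),
    "Constant": (0.5, 9),
    "Event Data": (0.5, 40),
    "Firestore Lookup": (0.5, 35),
    "Lookup Table": (1, 38),
    "Object Builder": (1, 1),
    "Split String": (1, 2),
}

tags_total = sum(traffic_source_tags.values())
other_total = sum(other_tags.values())
variables_total = sum(size * count for size, count in variables.values())
grand_total = tags_total + other_total + variables_total

print(f"Traffic source tags: {tags_total:.2f} KB")
print(f"Other tags:          {other_total:.2f} KB")
print(f"Variables:           {variables_total:.2f} KB")
print(f"Grand total:         {grand_total:.2f} KB")
print(f"Over the limit by:   {grand_total - LIMIT_KB:.2f} KB")
```

With these numbers, the variables alone account for 118KB, so the estimates for the native variables matter a lot for the final figure.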

I am not sure how much space the native variables take, but I am fairly sure it is somewhere around these estimates. And before you ask: yes, all these tags and variables are needed for the system to work. It is a complex system with multi-domain tracking, click ID mapping, object and array mapping, webhooks, Firestore and so on.

Given that Google is unlikely to increase the server GTM container limit in the near future (although I have already submitted feedback), the question is… what should I do in this situation?

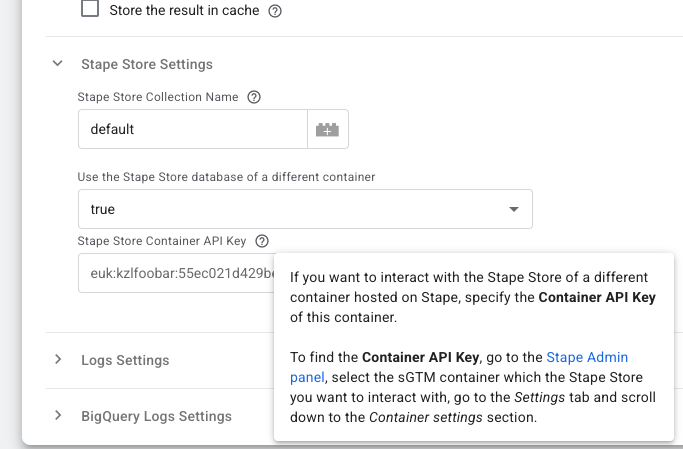

Should I create a “main container” with some of the Traffic Source Tags and a “secondary container” with the rest? That would mean having 2 different server GTM URLs to send webhook data to, and maintaining 2 different containers with a lot of variables in common.